How do you bring a fast PC to its knees? Developers have been doing this with music applications since the mid-80s. With the many real-time tasks a computer needs to execute to do even the simplest recording, audio processing, and mixing, it's accepted that an end user needs a fast processor to do any kind of serious music production. And as soon as the latest high-speed processors are delivered, developers find ways to use every available cycle.

Today's lesson is from Andrew Souter and the folks at 2CAudio. Their new Kaleidoscope plug-in takes a lot of horsepower to run, but it does some truly amazing things too...

Are you a musician?

Yes, absolutely. I am a pianist, composer, sound-designer and producer. I've done dance music releases on Paul Oakenfold's Perfecto label, Cyber Records in Amsterdam that is now part of Armada, and a few US labels. I've worked on scores for TV commercials for brands including Discovery, History, TLC, and games with Centropolis Entertainment and Sonic Mayhem in Los Angeles. Last year I started my own record label and publishing company and I released the first installment of a solo-piano trilogy, and an ambient album. I am now completing the second solo-piano album. Piano is a true passion of mine.

Who were the artists that originally inspired you in electronic music?

Initially I listened to a lot of ambient music from artists such as Robert Rich (whom I recently had the honor to work with), Michael Stearns, Steve Roach, Brian Eno, Vangelis, and pretty much everything else that was showcased on the Hearts Of Space and Echoes radio programs here in the US.

Ambient electronic music eventually led me to early IDM and glitch artists such as Aphex Twin, The Orb, Orbital, Prodigy, and then eventually "dance music proper" with artists from Perfecto, Platipus, Bedrock, Renaissance, and Global Underground records.

Sasha was a huge influence. I made monthly pilgrimages to Twilo Nightclub in New York City in the late-90s to see Sasha and Digweed play. It was truly a dream come true when I had the opportunity to work with him on Invol2ver, and his Moby and David Lynch remixes years later.

I have also always thoroughly enjoyed classical music: all of the minimalists, of course, like Philip Glass, Steve Reich, and others, as well as classical composers such as Bach, Chopin, Rimsky-Korsakov, and Tchaikovsky.

I understand you went to USC. How did you end up there?

I was very interested in music and technology at an early age and I always knew I wanted to have my own company. Part of me wanted to skip college and just start working in music and technology. I wanted to have new experiences, so I decided to go to school in California for both music and technology. I applied to Stanford because of the CCRMA, and to USC because it had one of the best entrepreneur programs, film schools, and music industry programs. USC offered me an academic scholarship for computer science, but I switched to the USC entrepreneur program. The CompSci curriculum simply had no free time for electives, and I would not have had any time to do anything related to music. I knew that I wanted to have my own company eventually, so I figured I'd hire formal computer science people as needed.

So you wanted to make music technology products?

Originally I wanted to make technological music like electronic music, dance music, film-scores etc., but since math and science came pretty easily for me I gravitated to the technical aspects. I remember reading the MIDI specification book, and the Computer Music Tutorial in calculus class while still in high school. I guess I am a nerd at heart.

While in the entrepreneur program at USC I wrote a business plan for a company that would produce music software products based around the idea of algorithmic composition. The product would be half a game and half a professional tool. Some of this concept is showing up now in a different capacity in Kaleidoscope.

What inspired you regarding algorithmic composition?

There was an obscure computer music scripting language called Symbolic Composer written by a guy in Amsterdam named Peter Stone. It was text-based, written with LISP - a language used primarily for artificial intelligence research. I was in communication with Peter for a while about this idea.

Did your business get started immediately after USC or did you do something else and then say it's time to start my own business?

After USC I was doing more production and scoring kind of work initially. I did some TV commercials and scoring and sound-design for games, and I got involved with producing dance music. I spent a few months in Amsterdam and worked in a studio there together with the leaders of the prominent club and radio station of the time. This is what led to my initial dance music releases. I call it my "Harder, Faster" stage, since I was mostly involved with more ambient styles before this. It took a few years to get back into doing products in the context of the business plan.

What was your first trade show with the company?

It was the 2007 Remix Hotel event at the Miami Winter Music Conference. Everyone should experience it at least once. I have not gone for the past couple of years. It's a fun time. All of the hotels have events and pool parties, and there are events downtown at clubs like Space.

There's also the Ultra Music Festival, which was originally part of WMC. That, in and of itself, has become a gigantic event. It was originally like a one-day concert, then it morphed into a full weekend concert, and last year it was two full weekends.

Galbanum and 2CAudio: What's the difference?

Galbanum is a content creation company. It focuses on sound-design products, content libraries, services, and music releases. Galbanum products range from commercial loop libraries such as drum loops and synthesizer expansions, to the more advanced sound design resources in the Architecture Line that are designed to help other sound-designers and developers create new products.

2CAudio is an independent software development company. I started it with Denis Malygin in 2008 to develop audio signal processing software plug-ins focusing on spatialization, advanced creative effects, and other future-forward ideas. Our typical customers are people doing scoring and sound design for film, television, and games, electronic musicians, and any other artists who embrace a modern take on music production.

How did you hook up with Denis?

Denis had a previous company, Spin Audio, that made a couple of different products, including a popular reverb, RoomVerb. Denis did really great things at a very young age, but coming from Russia it is a little more difficult to gain notoriety in the music technology world, which is sad as there are many brilliant people in Russia.

Denis and I were born the same year, in the middle of the Cold War. We took a leap of faith and learned to work together to create products that neither one of us could accomplish on our own.

We like to think that 2CAudio is a good example of people going outside their comfort zone and having enough empathy and compassion to see the best in people. If anything can help to unify the world I think it will be art, music, science, technology, and perhaps space exploration.

Did you teach yourself how to code?

Yes, I know DSP theory, but Denis is actually the formal computer scientist of the two of us. I designed the mathematics and functionality of the algorithms that we use, almost exclusively in Kaleidoscope, and to a large degree in B2 as well. I answer what and why and Denis works out how to integrate it and make it as fast as possible, which is certainly not an easy task, as I often try to push things as far as absolutely possible. Thankfully Denis is a complete genius and we work very well together.

Do you do the UI stuff?

Yes, I do the layout, design, and implementation. Denis does the GUI logic and comes up with enhancements. And we have the help of an interface designer in Paris who has defined the general style of the interface elements we have used starting with Aether 1.5.

What was your very first product?

Galbanum Architecture Volume One, which is a content library for U&I Software's MetaSynth. MetaSynth was invented and coded by Eric Wenger, who also made Bryce, a generative fractal landscape animation program that was very popular in the '90s. Eric's work is very high-level and not for the technically faint of heart. AV1 was thus a content library for this very powerful, but somewhat esoteric program. My goal was to make it more accessible, so I made a library that explored the musical sonification of purposefully designed images.

MetaSynth is similar to Kaleidoscope in that it uses the "pictures to control sound" metaphor. It's easy to take a picture of something and use an additive synthesizer to make some weird sound, but that's only fun a couple of times. It can lose its novelty quickly and is not all that useful in a musical context. A big part of making this musically useful is thinking about purposeful organization in time and frequency.

You have all this data in the form of a picture that can generate 512 or 1024 different voices simultaneously, but musicians generally only have two hands and ten fingers so it's quite hard to play more than ten notes simultaneously unless you happen to be Vishnu or Doctor Manhattan. So what do you do with all of the complexity offered by hundreds or thousands of voices? I thought deeply about these types of topics.

So composers would take graphic images from the scores that they were doing and feed them into...

Well the better thing to do is actually to use images that are purposefully generated to have a musical effect. Music is organization in time and frequency, so you have to think about how to organize things in a musically meaningful way, rhythmically and harmonically. If you just have random data, it sounds like random data and it's not interesting really, unless you need a random sound effect for five seconds in your film or whatever.

Chaos...

And it's absolutely a useful tool to have chaos, but you don't want chaos all of the time. Music is kind of a balance of predictability and surprise.

Tension and release...

Yes! It's about finding a balance between predictability and surprise, and trying to find new ways to create new musical surprises. Often that involves pretty extreme sound design or different organizations in time and frequency. That's really the goal of a lot of my content libraries, and also Kaleidoscope.

At what point did you decide you could make a living out of this?

Well, there's living and then there's living comfortably. I could feed myself from the initial Galbanum projects, but the very first 2CAudio product, Aether, won an EM Editor's Choice Award in 2010. It became a large commercial success for us, and remains that way today. We built our legacy from there, and 2CAudio has had almost all of my focus since 2009.

Where did the name, Aether, come from?

Aether comes from Greek mythology. It's sort of a fifth element, so to speak. Plato states in Timaeus "there is the most translucent kind [of air] which is called by the name of Aether" but it is really more subtle than that. It is more like the substrate or media of the spiritual or astral plane in esoteric philosophy.

Tell me about Kaleidoscope...

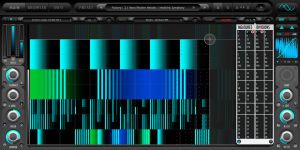

The three- or four-word sound-bite version is that it uses pictures to control sound. The technical description is that it is a massively parallel bank of resonators that can be tuned arbitrarily with scientific precision and modulated by over two million points of automation. It makes radically cool sounds and is incredibly deep and diverse. It will take us all years to understand fully everything it is capable of. I think it may become of historical importance to electronic music and sound-design. 1.0 is just the first step.
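To make the "bank of resonators modulated by a picture" idea concrete, here is a minimal sketch. It is not 2CAudio's algorithm: the two-pole resonator, the function names, and the mapping (image row selects a resonator, image column selects a time slice, pixel brightness sets that resonator's gain) are all assumptions chosen for illustration.

```python
import math


def resonator_coeffs(freq_hz, sample_rate, decay_s):
    """Feedback coefficients for a two-pole resonator (hypothetical helper).

    The filter is y[n] = x[n] + a1 * y[n-1] + a2 * y[n-2].
    """
    r = math.exp(-1.0 / (decay_s * sample_rate))   # pole radius from decay time
    theta = 2.0 * math.pi * freq_hz / sample_rate  # pole angle from tuning
    return 2.0 * r * math.cos(theta), -r * r


def render(image, freqs_hz, excitation, sample_rate=8000, decay_s=0.05):
    """Drive one resonator per image row and mix the results.

    image[row][col] is a brightness in 0..1. Each row is paired with one
    frequency in freqs_hz; each column covers an equal slice of the
    excitation's duration and sets that resonator's output gain.
    """
    n = len(excitation)
    cols = len(image[0])
    out = [0.0] * n
    for row, freq in zip(image, freqs_hz):
        a1, a2 = resonator_coeffs(freq, sample_rate, decay_s)
        y1 = y2 = 0.0
        for i, x in enumerate(excitation):
            y = x + a1 * y1 + a2 * y2
            y2, y1 = y1, y
            col = i * cols // n          # which time slice of the picture
            out[i] += row[col] * y       # pixel brightness scales this voice
    return out


# A 2x2 "picture": the 220 Hz voice sounds in the first half,
# the 330 Hz voice in the second half.
picture = [[1.0, 0.0],
           [0.0, 1.0]]
impulse = [1.0] + [0.0] * 799
samples = render(picture, [220.0, 330.0], impulse)
```

A real implementation would smooth the gain changes between pixels and run hundreds of voices in parallel; this sketch only shows the mapping from picture to sound.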

Your first Galbanum libraries were constructed by this concept, now this is like the concept editor, so you're coming full circle with Kaleidoscope?

Absolutely! This is something I've wanted to do for a long time. We actually started working on it in 2010. I appropriated my original content from AV1 to be used with Kaleidoscope. That functions as the starting point. So it's exactly full circle, yes.

Who is it for?

Electronic musicians, film and game composers, and sound designers. And I mean that in all possible contexts of sound design: I think of Kaleidoscope as both a content generator and an effects processor. I used an early version of it helping Sonic Mayhem with his work on Dead Rising 3, the zombie video game, for textural, horror movie-ish, dronish material. I also used it on a film score for which Robert Rich is the music director and composer. I've done an ambient album and some dance music with it as well.

Do you imagine it being used in a live setting with real-time controllers and such?

We don't think of it as a live performance tool quite yet. The CPU requirements for it are quite heavy, because it's a massively parallel algorithm, and there are extreme mathematics that go on behind the scenes. At the moment, adjusting knobs is sort of like setting up conditions, and smooth modulation is achieved from two different pictures. These can have different timings that can create textural things, polyrhythms, and other really unique sounds. We expect users will bounce or freeze results often in studio use.

In the ambient music world if you feed Kaleidoscope into B2 or Aether, which are designed to augment existing spatial cues, it creates instantly evolving soundscapes. You can have up to 512 different voices that can be tuned and spatialized dynamically so that they're moving throughout the sound field. You get this really incredible sense of motion and depth. "Spatial Choreography" is a good term to use.

You use a combination of the source material and Kaleidoscope's output?

You can, or you can let it generate a complete sound-field, sound-sculpture. You could argue that it's generating the composition in some cases if you're using musical tonality. AV1 has thousands of scales and tonalities, including everything from five and seven note scales, chords, ethnomusicology constructs, harmonic tunings, and various atonal mathematical functions. Musical sound is a subset of all sound that exists in the universe, and even within the context of making a record, in modern electronic music for example, there are often single-hit sound-effects that do not need to be tonal.
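As a rough illustration of what a "tuning" might look like as data, the sketch below builds frequency tables for a five-note scale, a seven-note scale, and a harmonic series. The function names, the use of 12-tone equal temperament, and the specific scale choices are assumptions for the example; nothing here describes the actual format of the AV1 or Kaleidoscope tuning files.

```python
MINOR_PENTATONIC = [0, 3, 5, 7, 10]   # five-note scale, in semitones
MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]  # seven-note scale, in semitones


def scale_tuning(root_hz, degrees, octaves):
    """Equal-tempered frequencies for the scale degrees across several octaves."""
    return [root_hz * 2.0 ** (octave + degree / 12.0)
            for octave in range(octaves)
            for degree in degrees]


def harmonic_tuning(fundamental_hz, count):
    """Integer multiples of a fundamental (a harmonic-series tuning)."""
    return [fundamental_hz * k for k in range(1, count + 1)]


# One frequency per voice: 15 pentatonic voices over three octaves,
# 21 major-scale voices, and the first 16 harmonics of 55 Hz.
pentatonic_voices = scale_tuning(110.0, MINOR_PENTATONIC, 3)
major_voices = scale_tuning(110.0, MAJOR_SCALE, 3)
harmonic_voices = harmonic_tuning(55.0, 16)
```

Each list could then assign one frequency to each voice in a resonator or oscillator bank, so that whatever the picture does, the result stays inside the chosen tonality.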

How do you use it personally?

If I'm working on an existing song, like a remix project, the existing music will have a chord progression and it has its musical structure. In this capacity you can use Kaleidoscope as an orchestration tool to come up with new ways to augment the existing musical story. If you're using chordal tunings you would basically render the performance for each chord that you need to follow the chord progression of the song that you're working with.

You can also use it as a tool to kick-start the creative process. You can use a scale tuning and experiment with different image map performances that will generate new melodic and harmonic content. You can experiment until you find something you like, and then it can help guide the composition process. I'm not trying to replace human musicians in any capacity. I am a human musician myself and I love playing piano, but I'm not Lang Lang or Glenn Gould. Some things I'm simply not going to play because I don't have the muscle memory for it, or I don't have the listening experience. Music is very experiential. Kaleidoscope is designed to provide new experiences. If you are exposed to unusual harmonies and unusual melodic constructs, this can actually augment the creative process and can actually train you to start to play these things as well. And it can encourage you to explore different modalities that you may not have discovered otherwise.

Of course Kaleidoscope is also an FX processor and supplies all forms of novel filter and delay-based effects! It really is a trip.
